Dark Web Map: Update to v2

Dark Web Map Series

I have been quiet about the Dark Web Map recently, mainly because I have been busy with other work. But now I want to tell you about a major update to the Dark Web Map: version 2! In this post, I’ll tell you what’s new, what’s changed, and the reasoning behind the development of v2.

No Embedded Images

The biggest change in Dark Web Map v2 is that I have started crawling with embedded images disabled. In the first version of the Dark Web Map, I manually reviewed and redacted questionable websites. While the dark web is notorious for violent, racist, gross, and illegal multimedia, my experience while building the first Dark Web Map was that very few sites put that kind of stuff on their home page. Of course, as with anything done by human beings on a large scale, oversights and mistakes were made. Over time, I received notifications of additional material that needed redacting, and it was quite time-consuming to make those small redactions, regenerate the entire map, and upload all of the tiles all over again.

If this were merely a technical problem, I might be able to make the redaction process more efficient. But as I prepared this update to the map, now a full year after the original release, I began to see that more and more sites display shocking and horrifying content directly on their home pages. In particular, a lot of new sites display child exploitation material directly on their home pages.

The material is too awful for words.

As a result, I made the decision to start crawling dark web sites with embedded images disabled: i.e. not loading multimedia from <img> tags or from style sheets. Reviewing and redacting these sites would be traumatizing, and even possessing some of these screenshots would be illegal. On the upside, the decision to globally disable all images increases my agility: I can make more frequent updates to the map without needing to schedule time for a human being to review and redact screenshots. Some of the sites are completely blank when embedded images are disabled, but most of the sites are still very recognizable.
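The policy itself is simple enough to sketch. The snippet below is a minimal, hypothetical illustration, not the crawler's actual code: it assumes a headless browser that labels each request with a resource type using Playwright's vocabulary ("document", "stylesheet", "image", and so on), where images referenced from <img> tags and from CSS rules such as background-image are typically both reported as "image". The names `BLOCKED_RESOURCE_TYPES` and `should_block` are made up for this example.

```python
# Hypothetical crawl policy: abort requests for embedded images.
# Chromium-based headless browsers typically report images loaded from
# <img> tags and from CSS (e.g. background-image) with the same resource
# type, "image", so one rule covers both sources.
BLOCKED_RESOURCE_TYPES = frozenset({"image"})


def should_block(resource_type: str) -> bool:
    """Return True if a request of this resource type should be aborted.

    `resource_type` is assumed to use Playwright's vocabulary
    ("document", "stylesheet", "image", "script", ...).
    """
    return resource_type in BLOCKED_RESOURCE_TYPES


# Example: the page and its stylesheet load, but the image does not.
requests = [
    ("http://example.onion/", "document"),
    ("http://example.onion/style.css", "stylesheet"),
    ("http://example.onion/logo.png", "image"),
]
allowed = [url for url, kind in requests if not should_block(kind)]
print(allowed)
```

With Playwright's Python API, for instance, a handler registered via `page.route("**/*", handler)` could call `route.abort()` when `should_block(route.request.resource_type)` is true and `route.continue_()` otherwise. Note that the top-level page is a "document" request, so a policy keyed only on resource type never blocks whatever the root URL itself returns.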

One known bug: an image/jpeg served from a site's root URL will still be displayed.

Not many sites host images at their root URL. I have reviewed the ones that do and will fix this bug in the next update of the Dark Web Map.

Censoring Onion Names

When I built the first version of the dark web map, I had a lot of debate with

colleagues about how exactly it should look, how it should work, and how to present the

data. One decision made late in the process—after collecting the crawl data and doing

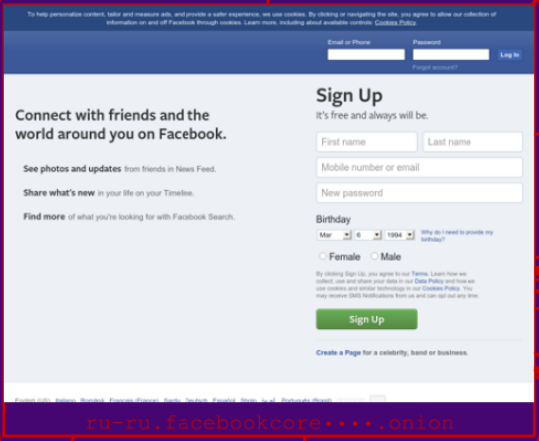

most of the pre-processing—was to mask off onion names. For example, Facebook’s onion is

facebookcorewwwi.onion, but in the v1 map it is displayed as facebookcore••••.

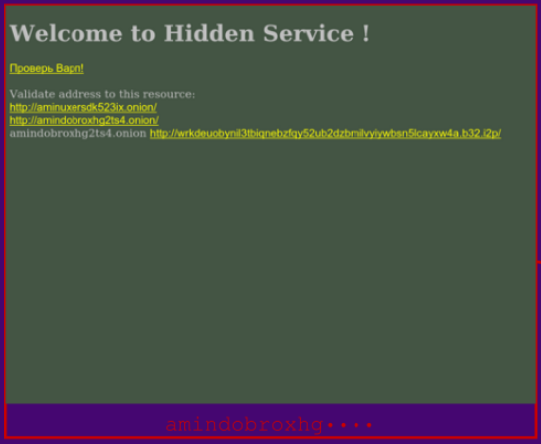

I masked off the last 4 characters because I didn’t want the map to be a directory of onion services. I didn’t want to point people towards dangerous and/or illegal dark web sites. Some sites display onion addresses (their own address or others) in the text of the page, however, leading to a situation like this:

In this example from the v1 map, the onion name is masked off in the caption at the bottom, but the screenshot shows that the page displays its own onion address! This particular example is harmless, but it obviously defeats the purpose of masking. In the v2 map, I am now masking off onions that appear in the text of the page.

In this example, notice how the onion is masked off both in the caption as well as in the screenshot of the page itself. This process isn’t perfect—it may not mask onions that are intentionally obscured—but it is a dramatic improvement, especially for onions that list links to illegal sites.
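A minimal sketch of this kind of text masking, assuming onion addresses are found with a regular expression over the base32 alphabet (a-z and 2-7) at the v2 length of 16 characters and the v3 length of 56 characters, and that each match is masked the same way as the captions (last four characters replaced). The pattern and the `mask_onions_in_text` helper are hypothetical; the map may do this differently. The second example shows the limitation mentioned above: an intentionally obscured address slips through.

```python
import re

MASK = "••••"

# Hypothetical pattern: a v3 (56-character) or v2 (16-character) onion name
# in the base32 alphabet, followed by ".onion". Obfuscated forms such as
# "name[.]onion" deliberately fall outside this pattern.
ONION_RE = re.compile(r"\b([a-z2-7]{56}|[a-z2-7]{16})\.onion\b", re.IGNORECASE)


def mask_onions_in_text(text: str) -> str:
    """Replace every onion address in `text` with its masked caption form."""
    # Keep all but the last four characters of the name and drop ".onion",
    # matching the caption style "facebookcore••••".
    return ONION_RE.sub(lambda m: m.group(1)[:-4] + MASK, text)


print(mask_onions_in_text("Mirror: http://facebookcorewwwi.onion/ (official)"))
print(mask_onions_in_text("Obscured: facebookcorewwwi[.]onion"))
```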

Hunchly’s Dark Web Report

Another big change is that I am now obtaining the onion data from the Hunchly Dark Web Report! Hunchly builds amazing OSINT software. As a side project, they also publish a free list of recently crawled onions in a daily report. I am using this report as a seed list for Dark Web Map v2.

As a side effect of this new data source, the map now includes v3 onions! These are the onions with really long, 56-character names. When these names appear in the map, they are abbreviated to fit into the space available.
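The post doesn't describe the abbreviation scheme, so the following is only a hypothetical sketch of one common approach: keep the start and end of the label and put an ellipsis in the middle, so the result never exceeds a given width. The `abbreviate` function and its `max_len` parameter are assumptions made for illustration.

```python
ELLIPSIS = "…"


def abbreviate(label: str, max_len: int) -> str:
    """Shorten `label` to at most `max_len` characters with a middle ellipsis.

    Hypothetical scheme: keep the start and the end of the label so both the
    beginning of the name and any suffix remain visible.
    """
    if max_len < 3:
        raise ValueError("max_len must be at least 3")
    if len(label) <= max_len:
        return label  # already fits; leave it alone
    keep = max_len - len(ELLIPSIS)  # characters of the original we can show
    head = (keep + 1) // 2          # favor the start when `keep` is odd
    tail = keep - head              # always >= 1 because max_len >= 3
    return label[:head] + ELLIPSIS + label[-tail:]


v3_like = "abcdefghij" * 5 + "klmnop"  # a made-up 56-character label
print(abbreviate(v3_like, 20))
print(abbreviate("facebookcore••••", 20))
```

A v2-length label already fits within 20 characters and is returned unchanged, so only the long v3 names are affected.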

I even had to upgrade the Tor proxy on my crawling machine because it was too old to support v3 onions! :sheepish-grin:

This data source also gives us subdomains of onions. For example, Facebook runs internationalized versions of its onion.

The Dark Web Map now displays these subdomains, like ru-ru.facebookcorewwwi.onion, as seen in the caption underneath this Facebook page.
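Combining subdomains with onion masking means the caption has to mask only the onion name itself, not the subdomain in front of it. Here is a minimal sketch, assuming the same last-four-characters rule as the v1 caption "facebookcore••••"; the `caption_for_host` helper is hypothetical.

```python
MASK = "••••"


def caption_for_host(host: str) -> str:
    """Build a masked caption for an onion hostname, keeping any subdomains.

    Hypothetical helper: masks the last four characters of the onion name
    itself, mirroring the v1 caption "facebookcore••••".
    """
    labels = host.lower().rstrip(".").split(".")
    if len(labels) < 2 or labels[-1] != "onion":
        raise ValueError(f"not an onion hostname: {host!r}")
    # Everything before the onion name is treated as subdomain labels.
    *subdomains, name, _tld = labels
    return ".".join([*subdomains, name[:-4] + MASK])


for host in ["facebookcorewwwi.onion", "ru-ru.facebookcorewwwi.onion"]:
    print(caption_for_host(host))
```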

Sharing Locations

In Dark Web Map v1, if you found something interesting in the map and wanted to show it to somebody else, it was a very cumbersome process. You might take a few screenshots and/or provide directions about how to navigate to an item of interest.

Dark Web Map v2 makes it super easy to share specific locations in the map! Just copy and paste your current URL, and anybody else who opens up that URL will be taken directly to the same view that you are looking at.
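One common way to make a map view shareable is to store the view state in the URL itself. The sketch below is hypothetical: it assumes the view is a center point (`x`, `y`) plus a `zoom` level kept in the URL fragment, and uses `https://example.com/map/` as a placeholder base URL. The post doesn't say which parameters the map actually uses.

```python
from dataclasses import dataclass
from urllib.parse import parse_qs, urlencode, urlsplit, urlunsplit


@dataclass(frozen=True)
class View:
    """Hypothetical map view state: center coordinates and zoom level."""
    x: float
    y: float
    zoom: int


def view_to_url(base_url: str, view: View) -> str:
    """Encode the view in the URL fragment so it survives copy and paste."""
    fragment = urlencode({"x": view.x, "y": view.y, "zoom": view.zoom})
    return urlunsplit(urlsplit(base_url)._replace(fragment=fragment))


def url_to_view(url: str) -> View:
    """Restore the view from a shared URL's fragment."""
    params = parse_qs(urlsplit(url).fragment)
    return View(
        x=float(params["x"][0]),
        y=float(params["y"][0]),
        zoom=int(params["zoom"][0]),
    )


shared = view_to_url("https://example.com/map/", View(x=0.25, y=-1.5, zoom=6))
print(shared)
print(url_to_view(shared))
```

Using the fragment rather than the query string is a reasonable choice for a client-side map: browsers don't send the fragment to the server, so the page can update it on every pan or zoom without triggering a reload.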

Smaller Map

Because of the decisions regarding images and the data source, I threw out all of the crawling data from the v1 map and started from scratch. As a result, the map has shrunk from 6.6k onions in the v1 map to 3.7k onions in the v2 map.

The difference in size isn’t immediately obvious when you start navigating the map, and this tradeoff makes sense to me now: I will be able to update the map more frequently going forward, which will eventually let me build bigger and bigger maps as I gather more historical data.